2 RNN

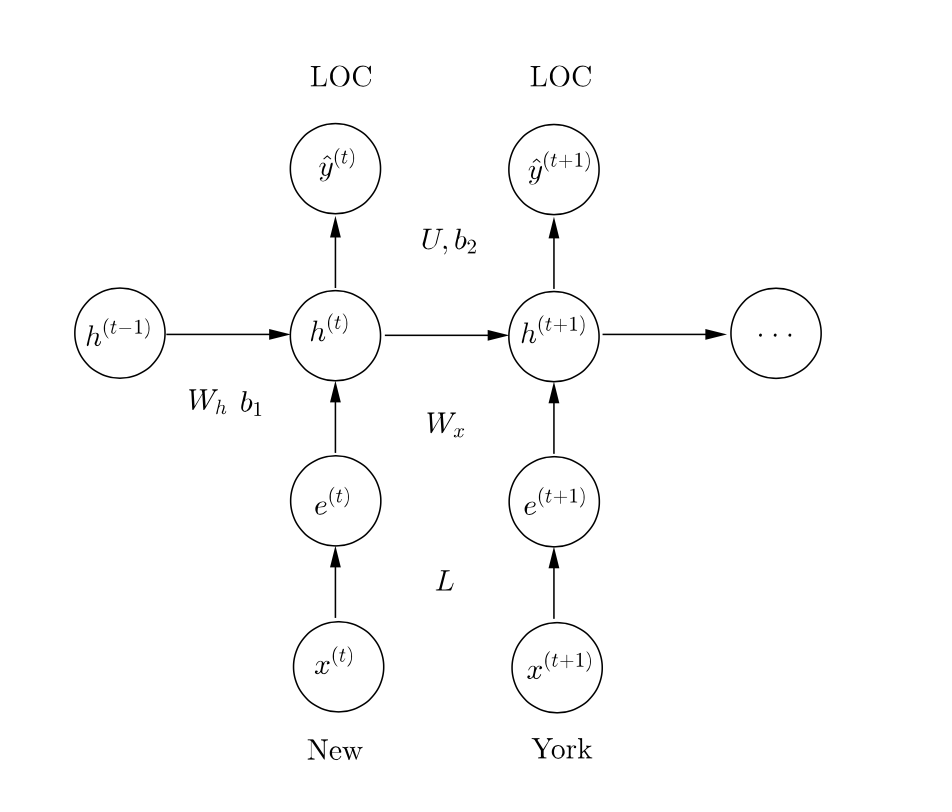

Now let's tackle the same problem with an RNN.

$$\begin{align}
\boldsymbol{e}^{(t)} &= \boldsymbol{x}^{(t)} L \nonumber \\
\boldsymbol{h}^{(t)} &= \sigma \left( \boldsymbol{h}^{(t-1)} W_{h} + \boldsymbol{e}^{(t)} W_{x} + \boldsymbol{b}_{1} \right) \nonumber \\
\hat{\boldsymbol{y}}^{(t)} &= \text{softmax} \left( \boldsymbol{h}^{(t)} U + \boldsymbol{b}_{2} \right) \nonumber
\end{align}$$

where $L \in \mathbb{R}^{V \times D}$ is the word embedding matrix; $W_{h} \in \mathbb{R}^{H \times H}$, $W_{x} \in \mathbb{R}^{D \times H}$ and $\boldsymbol{b}_{1} \in \mathbb{R}^{H}$ are the parameters of the RNN cell; and $U \in \mathbb{R}^{H \times C}$ and $\boldsymbol{b}_{2} \in \mathbb{R}^{C}$ are the softmax parameters. $V$ is the vocabulary size, $D$ the embedding dimension, $H$ the number of hidden units, and $C$ the number of classes (5 here).
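To make the three equations concrete, here is a minimal pure-Python sketch of the forward pass for one sentence. The toy sizes and random weights are assumptions for illustration, and word ids stand in for the one-hot $\boldsymbol{x}^{(t)}$, so $\boldsymbol{x}^{(t)} L$ becomes a row lookup. The helper names (`matvec`, `rnn_forward`, `rand_matrix`) are hypothetical, not taken from the assignment code.

```python
import math
import random


def matvec(v, M):
    """Row vector v (length n) times matrix M (n x m); returns a length-m list."""
    return [sum(v[i] * M[i][j] for i in range(len(v))) for j in range(len(M[0]))]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def softmax(z):
    m = max(z)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [x / total for x in exps]


def rnn_forward(word_ids, L, W_h, W_x, b1, U, b2):
    """Return y_hat^(t) for every position of a sentence given as word ids."""
    h = [0.0] * len(W_h)  # h^(0) = 0
    y_hats = []
    for idx in word_ids:
        e = L[idx]  # x^(t) L with a one-hot x^(t) selects row idx of L
        pre = [a + b + c for a, b, c in zip(matvec(h, W_h), matvec(e, W_x), b1)]
        h = [sigmoid(z) for z in pre]
        logits = [a + b for a, b in zip(matvec(h, U), b2)]
        y_hats.append(softmax(logits))
    return y_hats


def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


rng = random.Random(0)
V, D, H, C = 10, 4, 3, 5  # toy sizes, chosen only for illustration
L = rand_matrix(V, D, rng)
W_h, W_x, U = rand_matrix(H, H, rng), rand_matrix(D, H, rng), rand_matrix(H, C, rng)
b1, b2 = [0.0] * H, [0.0] * C

y_hats = rnn_forward([2, 7, 1], L, W_h, W_x, b1, U, b2)
y_other = rnn_forward([2, 7, 9], L, W_h, W_x, b1, U, b2)  # only the last word differs
```

Each $\hat{\boldsymbol{y}}^{(t)}$ is a distribution over the $C$ classes, and it depends only on words $1, \dots, t$: changing the last word leaves the first prediction untouched.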

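a How many more parameters does the RNN have than the baseline model?

It has $(H \times H) - (2w \times D \times H)$ more parameters: the RNN adds a hidden-to-hidden transition matrix $W_{h} \in \mathbb{R}^{H \times H}$, while its input-to-hidden matrix is no longer $W \in \mathbb{R}^{(2w + 1)D \times H}$ but $W_{x} \in \mathbb{R}^{D \times H}$. All other parameters ($L$, $\boldsymbol{b}_{1}$, $U$, $\boldsymbol{b}_{2}$) are the same size in both models.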
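A quick arithmetic check of that difference. This sketch assumes the window baseline computes $\boldsymbol{h} = f(\boldsymbol{x} W + \boldsymbol{b}_1)$ on the concatenated $(2w+1)$ window embeddings with the same $L$, $U$ and $\boldsymbol{b}_2$; the sizes below are made up for illustration.

```python
def window_baseline_params(V, D, H, C, w):
    """Window model: L (V x D), W ((2w+1)D x H), b1 (H), U (H x C), b2 (C)."""
    return V * D + (2 * w + 1) * D * H + H + H * C + C


def rnn_params(V, D, H, C):
    """RNN: L (V x D), W_x (D x H), W_h (H x H), b1 (H), U (H x C), b2 (C)."""
    return V * D + D * H + H * H + H + H * C + C


V, D, H, C, w = 100000, 50, 300, 5, 1  # assumed, illustrative sizes
diff = rnn_params(V, D, H, C) - window_baseline_params(V, D, H, C, w)
print(diff)  # 60000 = 300*300 - 2*1*50*300
```

Everything except $W_h$ and the input matrix cancels, leaving exactly $H^2 - 2wDH$.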

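Computational complexity

As with the baseline, the input-to-hidden and hidden-to-hidden matrix products dominate. Each of the $T$ steps costs $DH + H^2$, so the total for a sentence of length $T$ is: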

$O\left((D+H)HT\right)$
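A small count of the multiply-accumulates in each matrix product makes the bound concrete. The sizes are assumptions, the embedding lookup is treated as a row selection, and `rnn_matmul_costs` is a hypothetical helper.

```python
def rnn_matmul_costs(D, H, C, T):
    """Multiply-accumulate counts of the per-step matrix products, summed over T steps."""
    return {
        "input_to_hidden": D * H * T,   # e^(t) W_x: (1 x D)(D x H)
        "hidden_to_hidden": H * H * T,  # h^(t-1) W_h: (1 x H)(H x H)
        "hidden_to_output": H * C * T,  # h^(t) U: (1 x H)(H x C)
    }


D, H, C, T = 50, 300, 5, 20  # assumed sizes for illustration
costs = rnn_matmul_costs(D, H, C, T)
recurrent = costs["input_to_hidden"] + costs["hidden_to_hidden"]
print(recurrent, (D + H) * H * T)  # 2100000 2100000
print(costs["hidden_to_output"])   # 30000
```

The output layer adds $HCT$, which is dropped from the bound because $C = 5$ is much smaller than $D + H$.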

b Loss function and $F_1$

Give a scenario in which decreasing the loss can lower $F_1$.

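For the gold labeling

The James/MISC scandal/MISC

the prediction

The James/MISC scandal/O

has a lower loss than

The James/O scandal/O

because it gets one more non-O label right. Yet the entity-level $F_1$, computed over the whole corpus, goes down: the first prediction introduces a spurious entity "James"/MISC (a false positive that lowers precision), while the gold entity "James scandal" is missed either way.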
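The corpus-level effect can be checked with a small sketch. It assumes an entity is a maximal run of the same non-O tag (as in the example above) and adds a made-up rest of the corpus, ten correctly predicted one-token PER entities, so that precision is defined. `entities`, `f1_from_counts` and `corpus_f1` are hypothetical helpers, not the assignment's evaluation code.

```python
def entities(tags):
    """Group maximal runs of identical non-O tags into (start, end, label) spans."""
    spans, start = set(), None
    for i, tag in enumerate(tags + ["O"]):  # sentinel "O" closes a trailing run
        if start is not None and tag != tags[start]:
            spans.add((start, i, tags[start]))
            start = None
        if start is None and tag != "O":
            start = i
    return spans


def f1_from_counts(tp, fp, fn):
    """F1 = 2PR / (P + R) = 2tp / (2tp + fp + fn); 0 when undefined."""
    denom = 2 * tp + fp + fn
    return 2.0 * tp / denom if denom else 0.0


def corpus_f1(gold_seqs, pred_seqs):
    tp = fp = fn = 0
    for g, p in zip(gold_seqs, pred_seqs):
        gs, ps = entities(g), entities(p)
        tp, fp, fn = tp + len(gs & ps), fp + len(ps - gs), fn + len(gs - ps)
    return f1_from_counts(tp, fp, fn)


rest = [["PER"]] * 10            # hypothetical, all predicted correctly
gold = ["O", "MISC", "MISC"]     # The James/MISC scandal/MISC
pred_a = ["O", "MISC", "O"]      # The James/MISC scandal/O (lower loss)
pred_b = ["O", "O", "O"]         # The James/O scandal/O
f1_a = corpus_f1(rest + [gold], rest + [pred_a])  # tp=10, fp=1, fn=1
f1_b = corpus_f1(rest + [gold], rest + [pred_b])  # tp=10, fp=0, fn=1
```

Prediction A scores $10/11 \approx 0.909$ and prediction B $20/21 \approx 0.952$: the extra correct token turns into an extra false-positive entity.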

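Why is it hard to optimize $F_1$ directly?

$F_1$ is not differentiable: it is computed from discrete (argmax) predictions, so small parameter changes usually leave it unchanged. It is also a corpus-level statistic that does not decompose into a sum over sentences, so it cannot be computed per minibatch and parallelized the way a summed loss can.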
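The non-decomposability is easy to see with made-up entity counts for two minibatches: averaging the per-batch $F_1$ scores does not give the $F_1$ of the combined counts. `f1_from_counts` is a hypothetical helper.

```python
def f1_from_counts(tp, fp, fn):
    """F1 = 2tp / (2tp + fp + fn); 0 when undefined."""
    denom = 2 * tp + fp + fn
    return 2.0 * tp / denom if denom else 0.0


# Hypothetical (tp, fp, fn) entity counts for two minibatches.
batch1 = (1, 0, 0)  # F1 = 1.0
batch2 = (1, 3, 0)  # F1 = 2/5 = 0.4

mean_of_batch_f1 = (f1_from_counts(*batch1) + f1_from_counts(*batch2)) / 2  # (1.0 + 0.4) / 2
corpus_f1 = f1_from_counts(*[a + b for a, b in zip(batch1, batch2)])       # 4/7
```

A summed cross-entropy, by contrast, is the same whether it is accumulated batch by batch or over the whole corpus at once.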

c Implementing the RNN cell

Subclass TensorFlow's RNNCell and override `__call__`:

```python
# Method of the assignment's RNNCell class; assumes TensorFlow 1.x (tf.contrib)
# and `import tensorflow as tf` at module level.
def __call__(self, inputs, state, scope=None):
    """Updates the state using the previous @state and @inputs.
    Remember the RNN equations are:

    h_t = sigmoid(x_t W_x + h_{t-1} W_h + b)

    TODO: In the code below, implement an RNN cell using @inputs
    (x_t above) and the state (h_{t-1} above).
        - Define W_x, W_h, b to be variables of the appropriate shape
          using the `tf.get_variable' functions. Make sure you use
          the names "W_x", "W_h" and "b"!
        - Compute @new_state (h_t) defined above
    Tips:
        - Remember to initialize your matrices using the xavier
          initialization as before.
    Args:
        inputs: is the input vector of size [None, self.input_size]
        state: is the previous state vector of size [None, self.state_size]
        scope: is the name of the scope to be used when defining the variables inside.
    Returns:
        a pair of the output vector and the new state vector.
    """
    scope = scope or type(self).__name__

    # It's always a good idea to scope variables in functions lest they
    # be defined elsewhere!
    with tf.variable_scope(scope):
        ### YOUR CODE HERE (~6-10 lines)
        W_x = tf.get_variable('W_x', shape=(self.input_size, self._state_size), dtype=tf.float32,
                              initializer=tf.contrib.layers.xavier_initializer())
        W_h = tf.get_variable('W_h', shape=(self._state_size, self._state_size), dtype=tf.float32,
                              initializer=tf.contrib.layers.xavier_initializer())
        b = tf.get_variable('b', shape=(self._state_size,), dtype=tf.float32,
                            initializer=tf.contrib.layers.xavier_initializer())
        # h_t = sigmoid(h_{t-1} W_h + x_t W_x + b), shape [batch_size, state_size]
        new_state = tf.nn.sigmoid(tf.matmul(state, W_h) + tf.matmul(inputs, W_x) + b)
        ### END YOUR CODE ###

    # For an RNN, the output and state are the same (N.B. this
    # isn't true for an LSTM, though we aren't using one of those in
    # our assignment)
    output = new_state

    return output, new_state
```
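The docstring asks for Xavier initialization. As far as I know, `tf.contrib.layers.xavier_initializer()` defaults to the uniform variant, sampling from $U(-a, a)$ with $a = \sqrt{6 / (\text{fan}_\text{in} + \text{fan}_\text{out})}$. A pure-Python sketch of that rule, with assumed sizes and a hypothetical `xavier_uniform` helper:

```python
import math
import random


def xavier_uniform(fan_in, fan_out, rng=random):
    """Glorot/Xavier uniform init: U(-limit, limit), limit = sqrt(6 / (fan_in + fan_out))."""
    limit = math.sqrt(6.0 / (fan_in + fan_out))
    return [[rng.uniform(-limit, limit) for _ in range(fan_out)] for _ in range(fan_in)]


rng = random.Random(42)
D, H = 50, 300  # assumed input and state sizes for illustration
W_x = xavier_uniform(D, H, rng)  # shape (input_size, state_size)
W_h = xavier_uniform(H, H, rng)  # shape (state_size, state_size)
b = [0.0] * H                    # biases are commonly zero-initialized instead
```

The limit keeps the variance of activations roughly constant across layers; zero bias initialization is the choice used for `b2` in `add_prediction_op` below.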

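d Padding for the RNN

Implementing the RNN requires unrolling it over the whole sentence, but sentence lengths vary. One solution is to pad every sentence with zeros up to the length of the longest sentence.

Suppose the longest sentence has length $M$. For a sentence of length $T$ we need to:

1. Pad $x$ and $y$ with "zero vectors" up to length $M$; these are still one-hot vectors, representing a NULL token.

2. Create a mask vector $\left( m^{(t)} \right)_{t=1}^{M}$ that is 1 for all $t \leq T$ and 0 for all $t > T$.

3. After appending the $M-T$ padding tokens, modify the loss (and therefore the gradients) accordingly: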

$$\begin{align}
J &= \sum_{t=1}^{M} m^{(t)} \text{CE} \left( y^{(t)} , \hat{y}^{(t)} \right) \nonumber
\end{align}$$

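What would happen if we did not modify the loss?

The loss would include error terms from the padded positions, and their gradients would distort parameter learning. Masking those positions out with the mask vector solves this.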
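A minimal sketch of the masked loss $J$, assuming integer gold labels, one probability vector per position, and the boolean mask produced by the padding step. The probabilities are made up, and `masked_cross_entropy` is a hypothetical helper.

```python
import math


def masked_cross_entropy(labels, probs, mask):
    """J = sum_t m^(t) * CE(y^(t), y_hat^(t)); CE with a one-hot y is -log y_hat[label]."""
    return sum(-math.log(p[y]) for y, p, m in zip(labels, probs, mask) if m)


# A sentence of length T = 2 padded to M = 4; label 4 is the 'O' label used for padding.
labels = [1, 0, 4, 4]
mask = [True, True, False, False]
probs = [
    [0.1, 0.7, 0.1, 0.05, 0.05],
    [0.6, 0.1, 0.1, 0.1, 0.1],
    [0.2, 0.2, 0.2, 0.2, 0.2],          # padded position
    [0.9, 0.025, 0.025, 0.025, 0.025],  # padded position
]
J = masked_cross_entropy(labels, probs, mask)                # -log 0.7 - log 0.6
J_unmasked = masked_cross_entropy(labels, probs, [True] * 4)
```

Whatever the model predicts at padded positions, `J` only sees the real tokens, whereas the unmasked loss would also push the model to predict the padding label there.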

Implementing the padding:

```python
def pad_sequences(data, max_length):
    """Ensures each input-output sequence pair in @data is of length
    @max_length by padding it with zeros and truncating the rest of the
    sequence.

    TODO: In the code below, for every sentence, labels pair in @data,
    (a) create a new sentence which appends zero feature vectors until
    the sentence is of length @max_length. If the sentence is longer
    than @max_length, simply truncate the sentence to be @max_length
    long.
    (b) create a new label sequence similarly.
    (c) create a _masking_ sequence that has a True wherever there was a
    token in the original sequence, and a False for every padded input.

    Example: for the (sentence, labels) pair: [[4,1], [6,0], [7,0]], [1,
    0, 0], and max_length = 5, we would construct
        - a new sentence: [[4,1], [6,0], [7,0], [0,0], [0,0]]
        - a new label sequence: [1, 0, 0, 4, 4], and
        - a masking sequence: [True, True, True, False, False].

    Args:
        data: is a list of (sentence, labels) tuples. @sentence is a list
            containing the words in the sentence and @label is a list of
            output labels. Each word is itself a list of
            @n_features features. For example, the sentence "Chris
            Manning is amazing" and labels "PER PER O O" would become
            ([[1,9], [2,9], [3,8], [4,8]], [1, 1, 4, 4]). Here "Chris"
            the word has been featurized as "[1, 9]", and "[1, 1, 4, 4]"
            is the list of labels.
        max_length: the desired length for all input/output sequences.
    Returns:
        a new list of data points of the structure (sentence', labels', mask).
        Each of sentence', labels' and mask are of length @max_length.
        See the example above for more details.
    """
    ret = []

    # Use this zero vector when padding sequences.
    zero_vector = [0] * Config.n_features
    zero_label = 4  # corresponds to the 'O' tag

    for sentence, labels in data:
        ### YOUR CODE HERE (~4-6 lines)
        padN = max(max_length - len(sentence), 0)  # number of padding tokens (0 when truncating)
        sentence = sentence[0:max_length - padN] + [zero_vector] * padN
        labels = labels[0:max_length - padN] + [zero_label] * padN
        mask = [True] * (max_length - padN) + [False] * padN
        ret.append((sentence, labels, mask))
        ### END YOUR CODE ###
    return ret
```

e Implementing the RNN

The target is an $F_1$ score of 85%.

Again, I only paste the most important part:

```python
def add_prediction_op(self):
    """Adds the unrolled RNN:
        h_0 = 0
        for t in 1 to T:
            o_t, h_t = cell(x_t, h_{t-1})
            o_drop_t = Dropout(o_t, dropout_rate)
            y_t = o_drop_t U + b_2

    TODO: There are quite a few things you'll need to do in this function:
        - Define the variables U, b_2.
        - Define the vector h as a constant and initialize it with
          zeros. See tf.zeros and tf.shape for information on how
          to initialize this variable to be of the right shape.
          https://www.tensorflow.org/api_docs/python/constant_op/constant_value_tensors#zeros
          https://www.tensorflow.org/api_docs/python/array_ops/shapes_and_shaping#shape
        - In a for loop, begin to unroll the RNN sequence. Collect
          the predictions in a list.
        - When unrolling the loop, from the second iteration
          onwards, you will HAVE to call
          tf.get_variable_scope().reuse_variables() so that you do
          not create new variables in the RNN cell.
          See https://www.tensorflow.org/versions/master/how_tos/variable_scope/
        - Concatenate and reshape the predictions into a predictions
          tensor.
    Hint: You will find the function tf.pack (similar to np.asarray)
          useful to assemble a list of tensors into a larger tensor.
          https://www.tensorflow.org/api_docs/python/array_ops/slicing_and_joining#pack
    Hint: You will find the function tf.transpose and the perms
          argument useful to shuffle the indices of the tensor.
          https://www.tensorflow.org/api_docs/python/array_ops/slicing_and_joining#transpose

    Remember:
        * Use the xavier initialization for matrices.
        * Note that tf.nn.dropout takes the keep probability (1 - p_drop) as an argument.
          The keep probability should be set to the value of self.dropout_placeholder

    Returns:
        pred: tf.Tensor of shape (batch_size, max_length, n_classes)
    """

    x = self.add_embedding()
    # self.dropout_placeholder holds the *keep* probability expected by tf.nn.dropout
    keep_prob = self.dropout_placeholder

    preds = []  # Predicted output at each timestep should go here!

    # Use the cell defined below. For Q2, we will just be using the
    # RNNCell you defined, but for Q3, we will run this code again
    # with a GRU cell!
    if self.config.cell == "rnn":
        cell = RNNCell(Config.n_features * Config.embed_size, Config.hidden_size)
    elif self.config.cell == "gru":
        cell = GRUCell(Config.n_features * Config.embed_size, Config.hidden_size)
    else:
        raise ValueError("Unsupported cell type: " + self.config.cell)

    # Define U and b2 as variables.
    # Initialize state as vector of zeros.
    ### YOUR CODE HERE (~4-6 lines)
    U = tf.get_variable('U', shape=(Config.hidden_size, Config.n_classes),
                        dtype=tf.float32, initializer=tf.contrib.layers.xavier_initializer())
    b2 = tf.get_variable('b2', shape=(Config.n_classes,),
                         dtype=tf.float32, initializer=tf.constant_initializer(0.))
    h = tf.zeros(shape=(tf.shape(x)[0], Config.hidden_size))  # h_0 = 0, [batch_size, hidden_size]
    ### END YOUR CODE

    with tf.variable_scope("RNN"):
        for time_step in range(self.max_length):
            ### YOUR CODE HERE (~6-10 lines)
            if time_step > 0:
                # Reuse the cell's W_x, W_h, b instead of creating new ones at every step
                tf.get_variable_scope().reuse_variables()
            o_t, h = cell(x[:, time_step, :], h)
            o_drop_t = tf.nn.dropout(o_t, keep_prob)
            y_t = tf.matmul(o_drop_t, U) + b2  # [batch_size, n_classes]
            preds.append(y_t)
            ### END YOUR CODE

    # Make sure to reshape @preds here.
    ### YOUR CODE HERE (~2-4 lines)
    preds = tf.stack(preds, axis=0)              # [max_length, batch_size, n_classes]; tf.pack was renamed tf.stack in TF 1.0
    preds = tf.transpose(preds, perm=[1, 0, 2])  # [batch_size, max_length, n_classes]
    # Note: tf.reshape on the stacked [max_length, batch_size, n_classes] tensor is NOT
    # equivalent, because it would mix batch and time. A reshape only works on the
    # per-step list concatenated along axis 1, i.e.
    # tf.reshape(tf.concat(per_step_list, axis=1), (-1, self.max_length, Config.n_classes))
    ### END YOUR CODE

    assert preds.get_shape().as_list() == [None, self.max_length, self.config.n_classes], "predictions are not of the right shape. Expected {}, got {}".format([None, self.max_length, self.config.n_classes], preds.get_shape().as_list())

    return preds
```
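Why `tf.transpose` rather than a plain `tf.reshape` after `tf.stack` can be checked with nested Python lists standing in for tensors. This is a sketch: the helpers only mimic the TensorFlow ops, and each entry encodes its position as $100t + 10b + c$ so misplaced values are easy to spot.

```python
T, B, C = 3, 2, 2
# preds[t][b] is the logit vector of batch element b at time t (the stacked tensor).
preds = [[[100 * t + 10 * b + c for c in range(C)] for b in range(B)] for t in range(T)]


def transpose_tb(x):
    """[T][B][C] -> [B][T][C], like tf.transpose(x, perm=[1, 0, 2])."""
    return [[x[t][b] for t in range(len(x))] for b in range(len(x[0]))]


def flatten(x):
    return [v for plane in x for row in plane for v in row]


def reshape3(flat, shape):
    """Row-major reshape of a flat list into a nested 3-D list of the given shape."""
    d0, d1, d2 = shape
    return [[flat[(i * d1 + j) * d2:(i * d1 + j + 1) * d2] for j in range(d1)] for i in range(d0)]


good = transpose_tb(preds)                    # stack + transpose
bad = reshape3(flatten(preds), (B, T, C))     # stack + reshape: mixes batch and time
concat = [[v for t in range(T) for v in preds[t][b]] for b in range(B)]  # concat on axis 1 -> [B][T*C]
also_good = reshape3([v for row in concat for v in row], (B, T, C))
```

`good[1][2]` holds batch element 1 at time 2, while the stacked-then-reshaped version puts time-0 values of batch element 1 at `bad[0][1]`.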

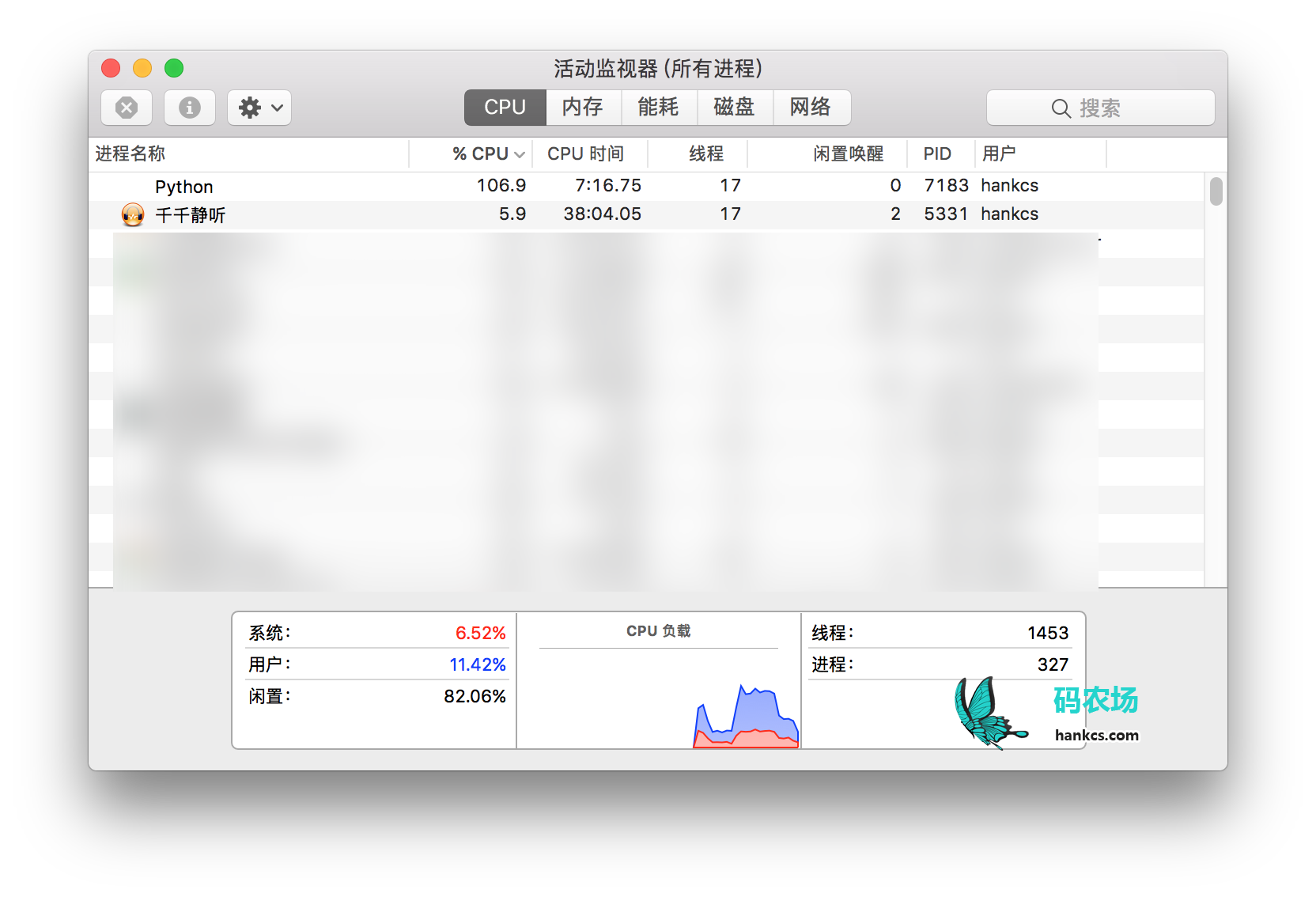

f Training

The handout says training takes about two hours on a CPU and 10-20 minutes on a GPU, but on my MBP's CPU it finished in roughly ten minutes.

```
DEBUG:Token-level confusion matrix:
go\gu   PER     ORG     LOC     MISC    O
PER     2987    32      47      12      71
ORG     136     1684    90      70      112
LOC     39      83      1907    21      44
MISC    43      45      47      1031    102
O       36      56      15      34      42618
DEBUG:Token-level scores:
label   acc     prec    rec     f1
PER     0.99    0.92    0.95    0.93
ORG     0.99    0.89    0.80    0.84
LOC     0.99    0.91    0.91    0.91
MISC    0.99    0.88    0.81    0.85
O       0.99    0.99    1.00    0.99
micro   0.99    0.98    0.98    0.98
macro   0.99    0.92    0.89    0.91
not-O   0.99    0.90    0.88    0.89
INFO:Entity level P/R/F1: 0.85/0.86/0.85
```
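The token-level scores in the log follow from the confusion matrix, whose rows are gold labels (`go`) and columns are guesses (`gu`). A small sketch that recomputes precision, recall and $F_1$ per label from those counts; `per_label_scores` is a hypothetical helper, not the assignment's logging code.

```python
labels = ["PER", "ORG", "LOC", "MISC", "O"]
# Rows: gold label, columns: guessed label (counts from the log).
confusion = [
    [2987, 32, 47, 12, 71],
    [136, 1684, 90, 70, 112],
    [39, 83, 1907, 21, 44],
    [43, 45, 47, 1031, 102],
    [36, 56, 15, 34, 42618],
]


def per_label_scores(cm):
    """Return {label: (precision, recall, f1)} rounded to two decimals."""
    scores = {}
    for k, name in enumerate(labels):
        tp = cm[k][k]
        prec = tp / float(sum(row[k] for row in cm))  # column sum = all guesses of this label
        rec = tp / float(sum(cm[k]))                  # row sum = all gold tokens of this label
        f1 = 2 * prec * rec / (prec + rec)
        scores[name] = (round(prec, 2), round(rec, 2), round(f1, 2))
    return scores


scores = per_label_scores(confusion)
print(scores["PER"], scores["ORG"])  # (0.92, 0.95, 0.93) (0.89, 0.8, 0.84)
```

The weakest label is ORG: 136 ORG tokens are guessed as PER, which is the main source of its low recall.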

This is actually worse than the window baseline model with window = 2.

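g Shortcomings

This naive RNN cannot use features from future tokens to inform the current decision. For example, "New" in "New York State University" is easily tagged LOC; a biRNN might fix this. The model also does not enforce consistency between the labels of adjacent tokens; the official solution says that introducing pair-wise agreements (i.e. using a CRF loss) can address this.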
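The first point can be seen with a toy scalar recurrence. This is a sketch, not a trained model: the weights and the 1-d "embeddings" are made up, and a real biRNN would use separate parameters for each direction (they are shared here for brevity).

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def scan(xs, w_h=0.5, w_x=1.0):
    """Toy scalar RNN h_t = sigmoid(w_h * h_{t-1} + w_x * x_t); returns every h_t."""
    h, states = 0.0, []
    for x in xs:
        h = sigmoid(w_h * h + w_x * x)
        states.append(h)
    return states


def birnn(xs):
    """Pair each position's forward state with its backward state."""
    forward = scan(xs)
    backward = scan(xs[::-1])[::-1]  # run right-to-left, then realign with positions
    return list(zip(forward, backward))


# Hypothetical 1-d embeddings, made up for the demo.
emb = {"New": 0.3, "York": 0.8, "State": -0.5, "University": -2.0, "City": 2.0}
a = birnn([emb[w] for w in "New York State University".split()])
b = birnn([emb[w] for w in "New York City".split()])
```

The forward state for "New" is identical in both phrases, so a unidirectional model must tag it the same way in both; only the backward state carries the differing right context.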

码农场

码农场

In add_prediction_op() of q3_gru.py, shouldn't dynamic_rnn's output be used rather than its state?

```python
outputs, state = tf.nn.dynamic_rnn(cell, x, dtype=tf.float32)
output = outputs[:, -1]
preds = tf.sigmoid(output)
```

The hint in the code says "returns the final state as a prediction".

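In 3 GRU, (a) latch, (ii), for the last case, i.e. h(t−1)=1, x(t)=1, why must h~(t) be 0? Couldn't we have h~(t)=1 and z(t)=0, which would still give h(t)=1?

Could the author please take a look when you have time? Thanks.

In 3 GRU, (a) latch, (ii), for the last case, when x_t=h_t=1, why can h~t only be 0? If h~t and z_t are both 1, h_t can also be 1, right? Could the author answer this when you have time? Thanks.

Thanks to the author = =!

I'm on Windows 10 and the assignment requires Python 2.7, but there is no matching TensorFlow build for it... (go figure)

Your code is the only thing I have to check my answers against.

Many thanks.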