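The lecture covered this far too briefly; for the underlying theory, see 《word2vec原理推导与代码分析》 ("word2vec: derivation and code analysis"). The code Google provides is also bare-bones: it has negative sampling only, with no Huffman tree. Besides, single-machine word2vec is already so efficient that I question the point of moving it to TF.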

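Assignment 5: Word2Vec & CBOW

The task this time is to train Word2Vec skip-gram and CBOW models on the text8 corpus. The skip-gram training code is provided; you are asked to implement CBOW training.

First, let's read through the skip-gram code Google provides. Unlike the implementation in 《word2vec原理推导与代码分析》, this one is clearly much smaller in scale: it keeps a vocabulary of only 50,000 entries (the 49,999 most frequent words plus an UNK token):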

    import collections

    # `words` is the tokenized text8 corpus, loaded earlier in the notebook.
    vocabulary_size = 50000

    def build_dataset(words):
        # Id 0 is reserved for out-of-vocabulary words.
        count = [['UNK', -1]]
        count.extend(collections.Counter(words).most_common(vocabulary_size - 1))
        # Ids are assigned in order of decreasing frequency.
        dictionary = dict()
        for word, _ in count:
            dictionary[word] = len(dictionary)
        data = list()
        unk_count = 0
        for word in words:
            if word in dictionary:
                index = dictionary[word]
            else:
                index = 0  # dictionary['UNK']
                unk_count = unk_count + 1
            data.append(index)
        count[0][1] = unk_count
        reverse_dictionary = dict(zip(dictionary.values(), dictionary.keys()))
        return data, count, dictionary, reverse_dictionary

    data, count, dictionary, reverse_dictionary = build_dataset(words)
    print('Most common words (+UNK)', count[:5])
    print('Sample data', data[:10])
    del words  # Hint to reduce memory.

Output:

    Most common words (+UNK) [['UNK', 418391], ('the', 1061396), ('of', 593677), ('and', 416629), ('one', 411764)]
    Sample data [5236, 3084, 12, 6, 195, 2, 3134, 46, 59, 156]

Mapping the first eight of those ids back to words gives:

    data: ['anarchism', 'originated', 'as', 'a', 'term', 'of', 'abuse', 'first']

dictionary maps words to ids; reverse_dictionary maps ids back to words.
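To make the id assignment concrete, here is a minimal sketch of the same logic on a toy corpus. The names `build_toy_dataset`, `toy_words`, and the vocabulary size of 3 are made up for illustration and are not part of the course code.

```python
import collections

def build_toy_dataset(words, vocab_size):
    # Id 0 is reserved for UNK; the rest are assigned by descending frequency.
    count = [['UNK', -1]]
    count.extend(collections.Counter(words).most_common(vocab_size - 1))
    dictionary = {word: idx for idx, (word, _) in enumerate(count)}
    # Words outside the vocabulary fall back to id 0.
    data = [dictionary.get(word, 0) for word in words]
    count[0][1] = data.count(0)
    reverse_dictionary = {idx: word for word, idx in dictionary.items()}
    return data, count, dictionary, reverse_dictionary

# Hypothetical toy corpus: 'the' x3, 'cat' x2, 'mat' x1.
toy_words = 'the cat the cat the mat'.split()
toy_data, toy_count, toy_dict, toy_rev = build_toy_dataset(toy_words, vocab_size=3)

print(toy_dict)   # word -> id
print(toy_data)   # the corpus as a list of ids
print(toy_count)  # frequencies, with the UNK count filled in
```

With a vocabulary of 3, only 'the' and 'cat' get their own ids, and 'mat' is mapped to UNK.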

Next, generate the skip-gram training instances:

    import collections
    import random
    import numpy as np

    data_index = 0

    def generate_batch(batch_size, num_skips, skip_window):
        global data_index
        assert batch_size % num_skips == 0
        assert num_skips <= 2 * skip_window
        batch = np.ndarray(shape=(batch_size), dtype=np.int32)
        labels = np.ndarray(shape=(batch_size, 1), dtype=np.int32)
        span = 2 * skip_window + 1  # [ skip_window target skip_window ]
        # Sliding window over the corpus; the center word sits at index skip_window.
        buffer = collections.deque(maxlen=span)
        for _ in range(span):
            buffer.append(data[data_index])
            data_index = (data_index + 1) % len(data)
        for i in range(batch_size // num_skips):
            target = skip_window  # target label at the center of the buffer
            targets_to_avoid = [skip_window]
            for j in range(num_skips):
                # Pick a context position that has not been used for this center word.
                while target in targets_to_avoid:
                    target = random.randint(0, span - 1)
                targets_to_avoid.append(target)
                batch[i * num_skips + j] = buffer[skip_window]
                labels[i * num_skips + j, 0] = buffer[target]
            # Slide the window one word to the right.
            buffer.append(data[data_index])
            data_index = (data_index + 1) % len(data)
        return batch, labels

    print('data:', [reverse_dictionary[di] for di in data[:8]])

    for num_skips, skip_window in [(2, 1), (4, 2)]:
        data_index = 0
        batch, labels = generate_batch(batch_size=8, num_skips=num_skips, skip_window=skip_window)
        print('\nwith num_skips = %d and skip_window = %d:' % (num_skips, skip_window))
        print(' batch:', [reverse_dictionary[bi] for bi in batch])
        print(' labels:', [reverse_dictionary[li] for li in labels.reshape(8)])

Output:

    with num_skips = 2 and skip_window = 1:
     batch: ['originated', 'originated', 'as', 'as', 'a', 'a', 'term', 'term']
     labels: ['as', 'anarchism', 'originated', 'a', 'term', 'as', 'a', 'of']

    with num_skips = 4 and skip_window = 2:
     batch: ['as', 'as', 'as', 'as', 'a', 'a', 'a', 'a']
     labels: ['anarchism', 'term', 'originated', 'a', 'term', 'of', 'as', 'originated']

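Skip-gram predicts the context of a known current word. In the function above, the window size is 2 * skip_window + 1, and num_skips training instances are generated for each window's center word. The x of an instance is the center word and the y is a context word drawn at random from the window. The draw is uniform over window positions (target = random.randint(0, span - 1)), and targets_to_avoid rules out the center word and repeats. It only decides which context words get paired with the center word, so word frequency plays no role at this step; frequency comes in later, during negative sampling. batch_size controls how many training instances are produced in total.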
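The roles of skip_window and num_skips are easier to see without the randomness. The sketch below simply enumerates every (center, context) pair inside the window; `skipgram_pairs` is a hypothetical helper written for this illustration, not part of the course code. When num_skips equals 2 * skip_window, generate_batch emits exactly these pairs for each center word (in random order); a smaller num_skips keeps a random subset of them.

```python
def skipgram_pairs(tokens, skip_window):
    """Return every (center, context) pair within skip_window of each center word."""
    pairs = []
    # Only positions with a full window on both sides are used as centers.
    for center_pos in range(skip_window, len(tokens) - skip_window):
        for offset in range(-skip_window, skip_window + 1):
            if offset != 0:  # the center word is never its own context
                pairs.append((tokens[center_pos], tokens[center_pos + offset]))
    return pairs

tokens = ['anarchism', 'originated', 'as', 'a', 'term']
pairs = skipgram_pairs(tokens, skip_window=1)
for center, context in pairs:
    print(center, '->', context)
```

Each center word contributes 2 * skip_window pairs, which is why the original function asserts num_skips <= 2 * skip_window.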

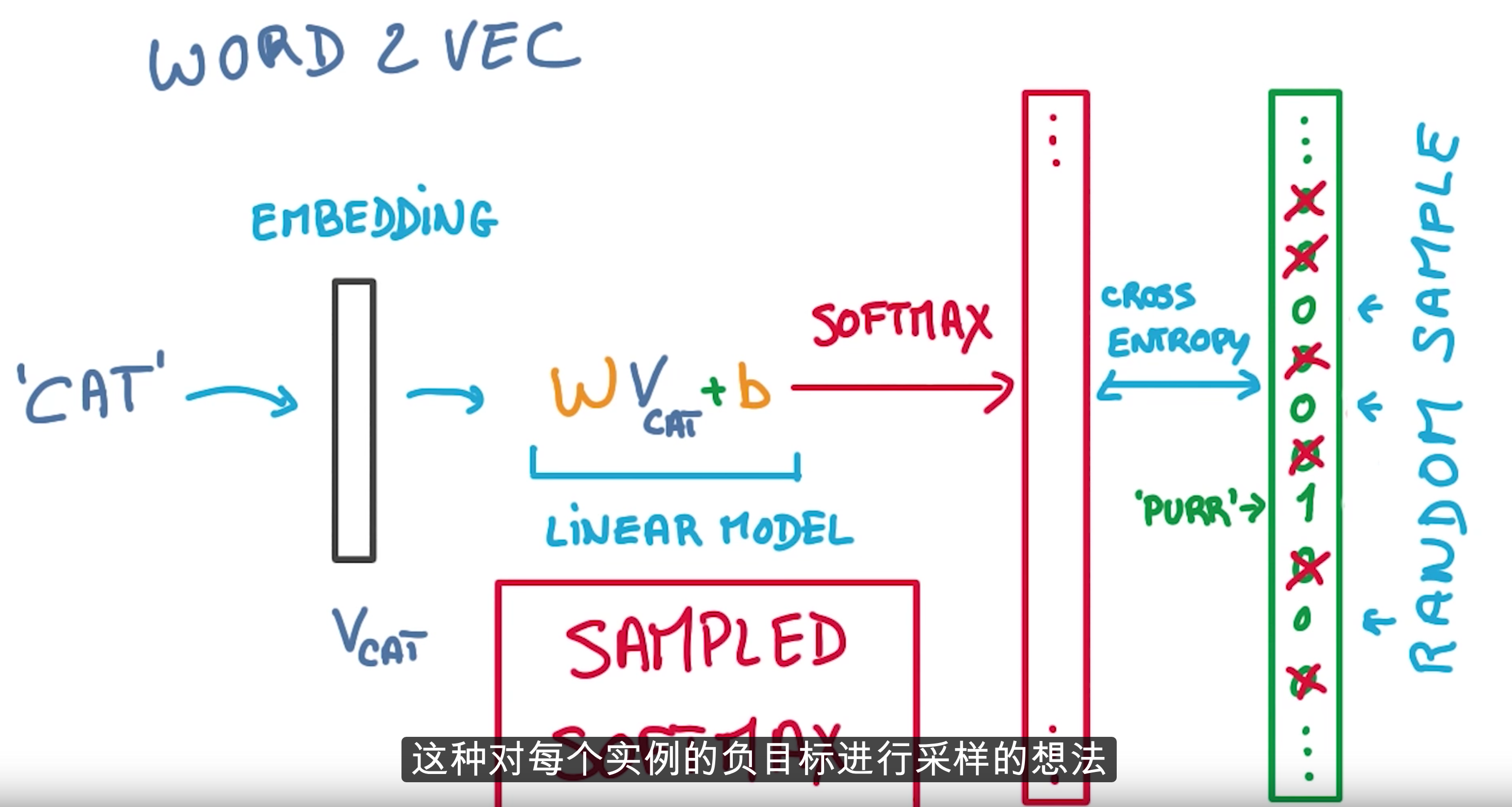

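For a classification problem in machine learning, positive examples alone are not enough; negative examples are needed too. Here the negatives are obtained by negative sampling:

This corresponds to the following in the code: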

    num_sampled = 64  # Number of negative examples to sample.

    # Compute the softmax loss, using a sample of the negative labels each time.
    loss = tf.reduce_mean(
        tf.nn.sampled_softmax_loss(softmax_weights, softmax_biases, embed,
                                   train_labels, num_sampled, vocabulary_size))

This is really just softmax logistic regression, a linear model through and through, and not deep at all.
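On how the negatives are chosen: as far as I can tell from the TensorFlow documentation, when no sampler is passed in, sampled_softmax_loss falls back to a log-uniform (Zipfian) candidate sampler, whose probability for class id k is (log(k + 2) - log(k + 1)) / log(range_max + 1). Because build_dataset assigns ids in order of decreasing frequency, low ids (frequent words) are drawn more often. The sketch below only reproduces that distribution in plain Python; `log_uniform_probs` is a made-up name, and unlike the real sampler this toy version draws with replacement.

```python
import math
import random

def log_uniform_probs(range_max):
    # P(k) = (log(k + 2) - log(k + 1)) / log(range_max + 1), for k in [0, range_max)
    norm = math.log(range_max + 1)
    return [(math.log(k + 2) - math.log(k + 1)) / norm for k in range(range_max)]

vocab = 50000
probs = log_uniform_probs(vocab)

# The terms telescope, so the probabilities sum to 1 (up to rounding).
print('sum of probabilities:', sum(probs))
print('P(id 0), P(id 1), P(last id):', probs[0], probs[1], probs[-1])

# Draw 64 negative ids; frequent (low-id) words dominate the sample.
rng = random.Random(0)
negatives = rng.choices(range(vocab), weights=probs, k=64)
print(sorted(negatives)[:10])
```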

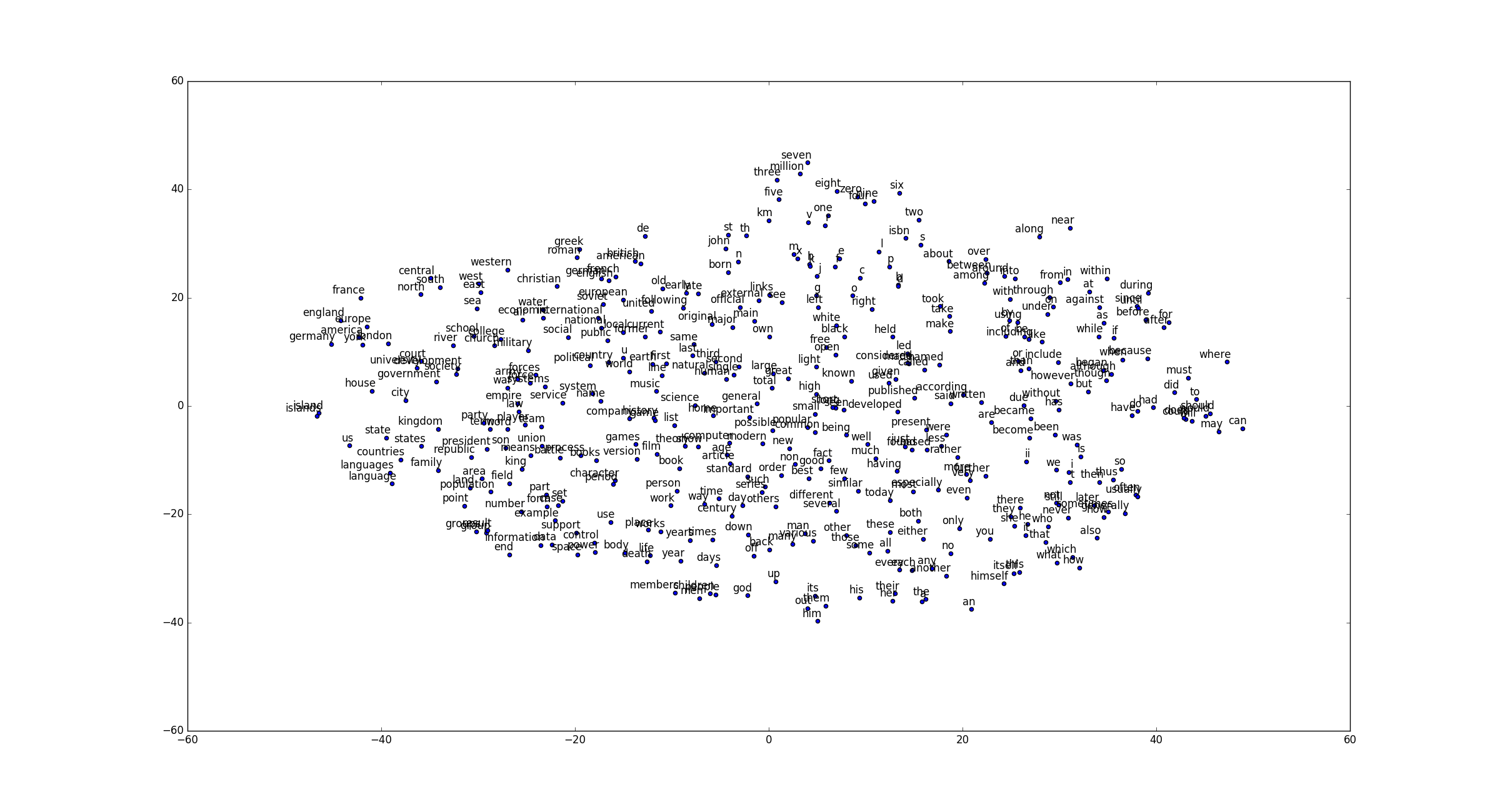

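Training proceeds just as for logistic regression, so there is not much to say. Once the embeddings are learned, they are visualized with t-SNE (PCA is reportedly out of fashion). This step gives: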

Problem

An alternative to skip-gram is CBOW, a model that predicts the current word from its known context. Implement it.

Generating training instances

    data_index = 0

    def generate_batch(batch_size, bag_window):
        global data_index
        span = 2 * bag_window + 1  # [ bag_window target bag_window ]
        batch = np.ndarray(shape=(batch_size, span - 1), dtype=np.int32)
        labels = np.ndarray(shape=(batch_size, 1), dtype=np.int32)
        buffer = collections.deque(maxlen=span)
        for _ in range(span):
            buffer.append(data[data_index])
            data_index = (data_index + 1) % len(data)
        for i in range(batch_size):
            # just for testing
            buffer_list = list(buffer)
            labels[i, 0] = buffer_list.pop(bag_window)
            batch[i] = buffer_list
            # iterate to the next buffer
            buffer.append(data[data_index])
            data_index = (data_index + 1) % len(data)
        return batch, labels

    print('data:', [reverse_dictionary[di] for di in data[:16]])

    for bag_window in [1, 2]:
        data_index = 0
        batch, labels = generate_batch(batch_size=4, bag_window=bag_window)
        print('\nwith bag_window = %d:' % (bag_window))
        print(' batch:', [[reverse_dictionary[w] for w in bi] for bi in batch])
        print(' labels:', [reverse_dictionary[li] for li in labels.reshape(4)])

This gives:

    data: ['anarchism', 'originated', 'as', 'a', 'term', 'of', 'abuse', 'first', 'used', 'against', 'early', 'working', 'class', 'radicals', 'including', 'the']

    with bag_window = 1:
     batch: [['anarchism', 'as'], ['originated', 'a'], ['as', 'term'], ['a', 'of']]
     labels: ['originated', 'as', 'a', 'term']

    with bag_window = 2:
     batch: [['anarchism', 'originated', 'a', 'term'], ['originated', 'as', 'term', 'of'], ['as', 'a', 'of', 'abuse'], ['a', 'term', 'abuse', 'first']]
     labels: ['as', 'a', 'term', 'of']

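This time the input x is several words (the context), while y is still a single word, and negative sampling is still needed.

Training

The complete training code: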

    import math
    import tensorflow as tf

    batch_size = 128
    embedding_size = 128  # Dimension of the embedding vector.
    bag_window = 2  # How many words to consider left and right.
    # We pick a random validation set to sample nearest neighbors. Here we limit the
    # validation samples to the words that have a low numeric ID, which by
    # construction are also the most frequent.
    valid_size = 16  # Random set of words to evaluate similarity on.
    valid_window = 100  # Only pick dev samples in the head of the distribution.
    valid_examples = np.array(random.sample(range(valid_window), valid_size))
    num_sampled = 64  # Number of negative examples to sample.

    graph = tf.Graph()

    with graph.as_default(), tf.device('/cpu:0'):

        # Input data.
        train_dataset = tf.placeholder(tf.int32, shape=[batch_size, bag_window * 2])
        train_labels = tf.placeholder(tf.int32, shape=[batch_size, 1])
        valid_dataset = tf.constant(valid_examples, dtype=tf.int32)

        # Variables.
        embeddings = tf.Variable(
            tf.random_uniform([vocabulary_size, embedding_size], -1.0, 1.0))
        softmax_weights = tf.Variable(
            tf.truncated_normal([vocabulary_size, embedding_size],
                                stddev=1.0 / math.sqrt(embedding_size)))
        softmax_biases = tf.Variable(tf.zeros([vocabulary_size]))

        # Model.
        # Look up embeddings for inputs.
        embeds = tf.nn.embedding_lookup(embeddings, train_dataset)
        # Compute the softmax loss, using a sample of the negative labels each time.
        loss = tf.reduce_mean(
            tf.nn.sampled_softmax_loss(softmax_weights, softmax_biases, tf.reduce_sum(embeds, 1),
                                       train_labels, num_sampled, vocabulary_size))

        # Optimizer.
        optimizer = tf.train.AdagradOptimizer(1.0).minimize(loss)

        # Compute the similarity between minibatch examples and all embeddings.
        # We use the cosine distance:
        norm = tf.sqrt(tf.reduce_sum(tf.square(embeddings), 1, keep_dims=True))
        normalized_embeddings = embeddings / norm
        valid_embeddings = tf.nn.embedding_lookup(
            normalized_embeddings, valid_dataset)
        similarity = tf.matmul(valid_embeddings, tf.transpose(normalized_embeddings))

    num_steps = 100001

    with tf.Session(graph=graph) as session:
        tf.initialize_all_variables().run()
        print('Initialized')
        average_loss = 0
        for step in range(num_steps):
            batch_data, batch_labels = generate_batch(
                batch_size, bag_window)
            feed_dict = {train_dataset: batch_data, train_labels: batch_labels}
            _, l = session.run([optimizer, loss], feed_dict=feed_dict)
            average_loss += l
            if step % 2000 == 0:
                if step > 0:
                    average_loss = average_loss / 2000
                # The average loss is an estimate of the loss over the last 2000 batches.
                print('Average loss at step %d: %f' % (step, average_loss))
                average_loss = 0
            # note that this is expensive (~20% slowdown if computed every 500 steps)
            if step % 10000 == 0:
                sim = similarity.eval()
                for i in range(valid_size):
                    valid_word = reverse_dictionary[valid_examples[i]]
                    top_k = 8  # number of nearest neighbors
                    nearest = (-sim[i, :]).argsort()[1:top_k + 1]
                    log = 'Nearest to %s:' % valid_word
                    for k in range(top_k):
                        close_word = reverse_dictionary[nearest[k]]
                        log = '%s %s,' % (log, close_word)
                    print(log)
        final_embeddings = normalized_embeddings.eval()

    from matplotlib import pylab
    from sklearn.manifold import TSNE

    num_points = 400

    # Project the embeddings of the 400 most frequent words (skipping UNK) to 2-D.
    tsne = TSNE(perplexity=30, n_components=2, init='pca', n_iter=5000)
    two_d_embeddings = tsne.fit_transform(final_embeddings[1:num_points + 1, :])

    def plot(embeddings, labels):
        assert embeddings.shape[0] >= len(labels), 'More labels than embeddings'
        pylab.figure(figsize=(15, 15))  # in inches
        for i, label in enumerate(labels):
            x, y = embeddings[i, :]
            pylab.scatter(x, y)
            pylab.annotate(label, xy=(x, y), xytext=(5, 2), textcoords='offset points',
                           ha='right', va='bottom')
        pylab.show()

    words = [reverse_dictionary[i] for i in range(1, num_points + 1)]
    plot(two_d_embeddings, words)

The only difference from skip-gram is the input. In skip-gram the input is a single vector, whereas in CBOW the input is several vectors:

    train_dataset = tf.placeholder(tf.int32, shape=[batch_size, bag_window * 2])
    ...
    embeds = tf.nn.embedding_lookup(embeddings, train_dataset)

Before being fed to the softmax, they are summed into a single vector:

    loss = tf.reduce_mean(
        tf.nn.sampled_softmax_loss(softmax_weights, softmax_biases, tf.reduce_sum(embeds, 1),
                                   train_labels, num_sampled, vocabulary_size))
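The shapes involved in that lookup and sum can be checked with a small NumPy sketch. The toy sizes and values below are invented for illustration; NumPy fancy indexing stands in for tf.nn.embedding_lookup and `.sum(axis=1)` for tf.reduce_sum(embeds, 1).

```python
import numpy as np

vocab_size, embedding_size = 5, 3
# Toy embedding table: row i is the vector of word id i.
embeddings = np.arange(vocab_size * embedding_size, dtype=np.float32).reshape(
    vocab_size, embedding_size)

# A batch of 2 examples with bag_window = 1, i.e. 2 context ids per example.
context_ids = np.array([[0, 2],
                        [1, 4]])

# Lookup: one vector per context word -> [batch, 2 * bag_window, embedding_size].
embeds = embeddings[context_ids]
# Sum over the context axis -> [batch, embedding_size], one vector per example.
cbow_input = embeds.sum(axis=1)

print(embeds.shape)      # (2, 2, 3)
print(cbow_input.shape)  # (2, 3)
print(cbow_input)
```

Summing is what the code above does; averaging the context vectors instead (tf.reduce_mean over the same axis) would be an equally simple variant.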

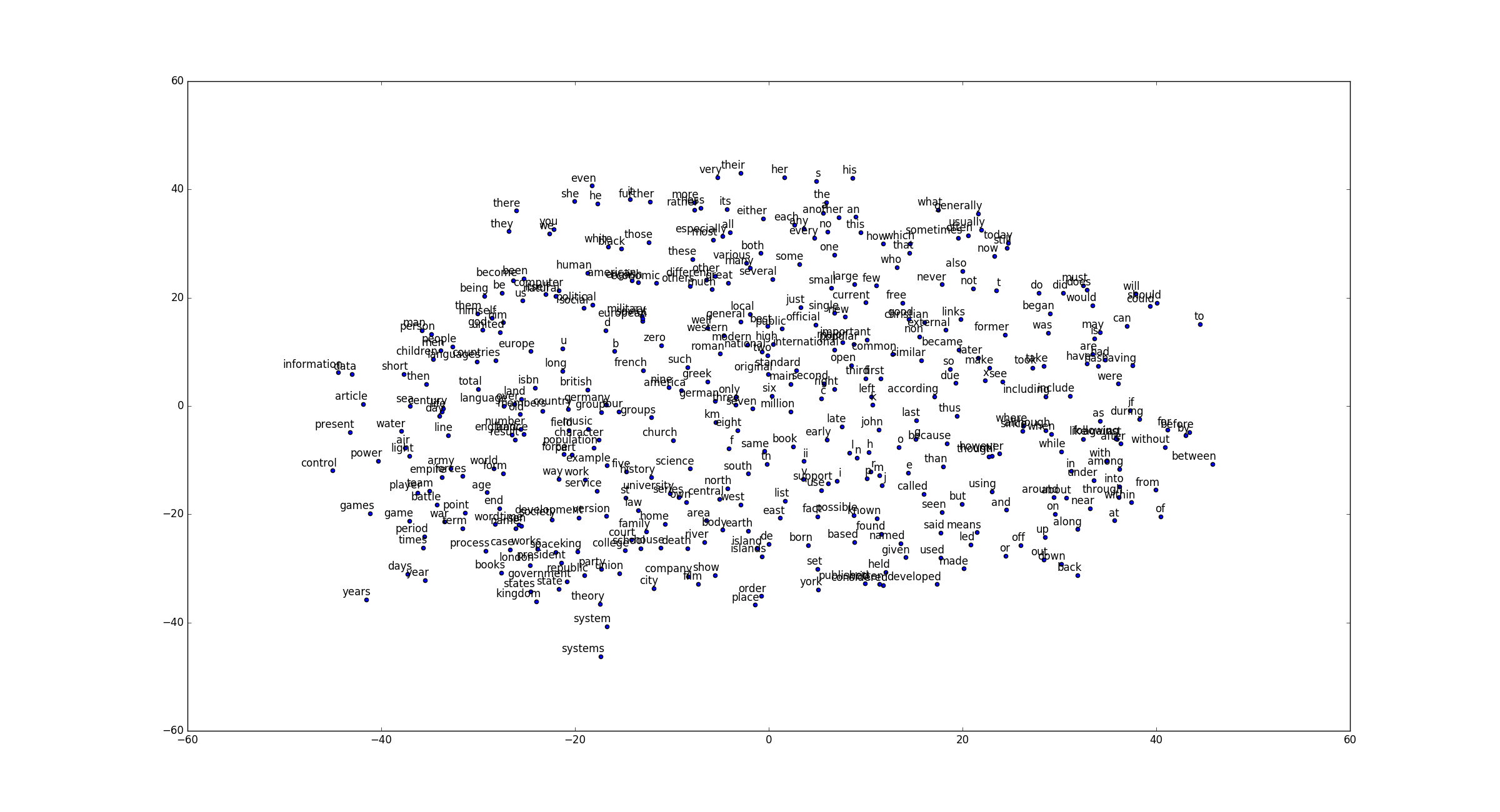

Visualization

CBOW gives:

Intuitively, CBOW seems to be somewhat better.

Reference

https://github.com/Arn-O/udacity-deep-learning/blob/master/5_word2vec.ipynb

码农场


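One correction: the negative sampling in this word2vec does take word frequency into account. It is implemented inside sampled_softmax_loss.

Thank you for publishing this article. The Udacity tutorial felt far too vague at this point. I have one question and would appreciate your help: when the dataset is generated, what are num_skips and skip_window in the source code doing? I don't quite understand the point of generating the training data this way.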