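The lecture gave a shallow introduction to convolutional networks and the common tricks that go with them. It is a crash course, so the underlying principles were not explored in depth. The programming assignment hands you a working implementation and only asks for small improvements on top of it, just enough to get a taste.

Assignment 4: Convolutional Models

Design and train a convolutional neural network.

In the previous two assignments we implemented fairly deep fully connected multilayer networks; the goal this time is to add convolutional layers.

To lower the difficulty, Google provides sample code outright, namely a neural network with two convolutional layers: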

    import tensorflow as tf  # written against the old graph-mode API (placeholders, sessions)

    # train_dataset, valid_dataset, test_dataset, the matching *_labels arrays
    # and accuracy() come from earlier cells of the course notebook.

    image_size = 28
    num_labels = 10
    num_channels = 1  # grayscale

    batch_size = 16
    patch_size = 5
    depth = 16
    num_hidden = 64

    graph = tf.Graph()

    with graph.as_default():

        # Input data.
        tf_train_dataset = tf.placeholder(
            tf.float32, shape=(batch_size, image_size, image_size, num_channels))
        tf_train_labels = tf.placeholder(tf.float32, shape=(batch_size, num_labels))
        tf_valid_dataset = tf.constant(valid_dataset)
        tf_test_dataset = tf.constant(test_dataset)

        # Variables.
        layer1_weights = tf.Variable(tf.truncated_normal(
            [patch_size, patch_size, num_channels, depth], stddev=0.1))
        layer1_biases = tf.Variable(tf.zeros([depth]))
        layer2_weights = tf.Variable(tf.truncated_normal(
            [patch_size, patch_size, depth, depth], stddev=0.1))
        layer2_biases = tf.Variable(tf.constant(1.0, shape=[depth]))
        # Two stride-2 convolutions shrink each spatial dimension by a factor of 4.
        layer3_weights = tf.Variable(tf.truncated_normal(
            [(image_size // 4) * (image_size // 4) * depth, num_hidden], stddev=0.1))
        layer3_biases = tf.Variable(tf.constant(1.0, shape=[num_hidden]))
        layer4_weights = tf.Variable(tf.truncated_normal(
            [num_hidden, num_labels], stddev=0.1))
        layer4_biases = tf.Variable(tf.constant(1.0, shape=[num_labels]))

        # Model.
        def model(data):
            conv = tf.nn.conv2d(data, layer1_weights, [1, 2, 2, 1], padding='SAME')
            hidden = tf.nn.relu(conv + layer1_biases)
            conv = tf.nn.conv2d(hidden, layer2_weights, [1, 2, 2, 1], padding='SAME')
            hidden = tf.nn.relu(conv + layer2_biases)
            # Flatten [batch, height, width, depth] to [batch, height * width * depth].
            shape = hidden.get_shape().as_list()
            reshape = tf.reshape(hidden, [shape[0], shape[1] * shape[2] * shape[3]])
            hidden = tf.nn.relu(tf.matmul(reshape, layer3_weights) + layer3_biases)
            return tf.matmul(hidden, layer4_weights) + layer4_biases

        # Training computation.
        logits = model(tf_train_dataset)
        # Keyword arguments avoid depending on the positional order of logits and labels.
        loss = tf.reduce_mean(
            tf.nn.softmax_cross_entropy_with_logits(logits=logits, labels=tf_train_labels))

        # Optimizer.
        optimizer = tf.train.GradientDescentOptimizer(0.05).minimize(loss)

        # Predictions for the training, validation, and test data.
        train_prediction = tf.nn.softmax(logits)
        valid_prediction = tf.nn.softmax(model(tf_valid_dataset))
        test_prediction = tf.nn.softmax(model(tf_test_dataset))

    num_steps = 1001

    with tf.Session(graph=graph) as session:
        tf.initialize_all_variables().run()
        print('Initialized')
        for step in range(num_steps):
            offset = (step * batch_size) % (train_labels.shape[0] - batch_size)
            batch_data = train_dataset[offset:(offset + batch_size), :, :, :]
            batch_labels = train_labels[offset:(offset + batch_size), :]
            feed_dict = {tf_train_dataset: batch_data, tf_train_labels: batch_labels}
            _, l, predictions = session.run(
                [optimizer, loss, train_prediction], feed_dict=feed_dict)
            if (step % 50 == 0):
                print('Minibatch loss at step %d: %f' % (step, l))
                print('Minibatch accuracy: %.1f%%' % accuracy(predictions, batch_labels))
                print('Validation accuracy: %.1f%%' % accuracy(
                    valid_prediction.eval(), valid_labels))
        print('Original Test accuracy: %.1f%%' % accuracy(test_prediction.eval(), test_labels))
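The training loop calls accuracy(), which is not defined in this snippet; it comes from an earlier cell of the notebook. As a rough sketch, assuming predictions are rows of per-class scores and labels are one-hot rows, it compares the argmax of each pair of rows. The plain-Python version below is only an illustration (the notebook's own helper works on NumPy arrays):

```python
def accuracy(predictions, labels):
    """Percentage of rows whose highest-scoring class matches the one-hot label."""
    def argmax(row):
        return max(range(len(row)), key=lambda i: row[i])

    correct = sum(argmax(p) == argmax(l) for p, l in zip(predictions, labels))
    return 100.0 * correct / len(predictions)


# Three samples, three classes; the second prediction is wrong.
predictions = [[0.7, 0.2, 0.1], [0.1, 0.3, 0.6], [0.2, 0.5, 0.3]]
labels = [[1, 0, 0], [0, 1, 0], [0, 1, 0]]
print('%.1f%%' % accuracy(predictions, labels))  # 66.7%
```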

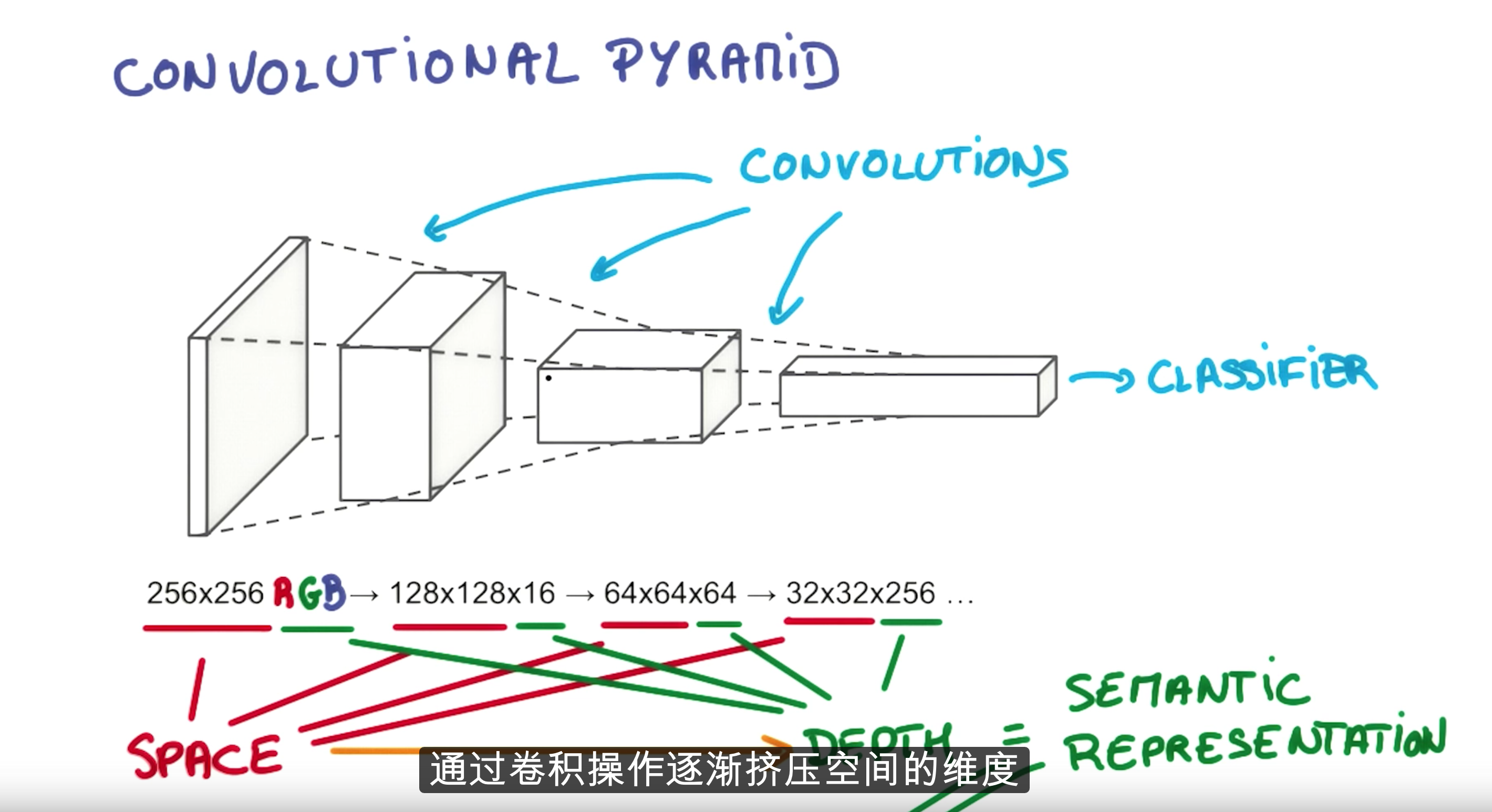

其中layer1和2是卷积层,layer3是隐藏层,layer4是输出层。网络结构类似lecture里面的范例:

上图有3个卷积层,depth变化是3->16->64->256。

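In the sample code the hidden and output layers are nothing special, so focus on the two convolutional layers. The shape of layer1_weights is

    [patch_size, patch_size, num_channels, depth]

This is the shape of the filter, not of the output. layer1 slides a patch_size*patch_size window over the 28*28 image and maps each window to a single output pixel, whose channels are "thickened" from num_channels to depth. Every window position shares the same set of parameters.

Likewise, the shape of layer2_weights is

    [patch_size, patch_size, depth, depth]

The filter size and the depth stay the same, but each patch of the old feature map is again mapped to a single point of the new one, so another level of abstraction certainly takes place. This convolutional layer is not just decoration.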
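The spatial sizes can be checked by hand. A minimal sketch, assuming TensorFlow's documented rule that a SAME-padded convolution produces ceil(input_size / stride) outputs along each spatial dimension; same_output_size is a helper name made up for this illustration:

```python
import math


def same_output_size(input_size, stride):
    """Spatial output size of a SAME-padded convolution or pooling op."""
    return int(math.ceil(input_size / float(stride)))


image_size, depth = 28, 16

after_layer1 = same_output_size(image_size, 2)    # 28 -> 14
after_layer2 = same_output_size(after_layer1, 2)  # 14 -> 7
flattened = after_layer2 * after_layer2 * depth   # 7 * 7 * 16

print(after_layer1, after_layer2, flattened)  # 14 7 784
```

784 is the first dimension of layer3_weights, which is why the reshape in model() lines up with the following matmul.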

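Inside the model function,

    conv = tf.nn.conv2d(data, layer1_weights, [1, 2, 2, 1], padding='SAME')
    hidden = tf.nn.relu(conv + layer1_biases)
    conv = tf.nn.conv2d(hidden, layer2_weights, [1, 2, 2, 1], padding='SAME')
    hidden = tf.nn.relu(conv + layer2_biases)

performs the convolutions and gets the job done.

The third argument is the strides; [1, 2, 2, 1] means stride=2 along both height and width. From the official documentation: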

In detail, with the default NHWC format,

    output[b, i, j, k] =
        sum_{di, dj, q} input[b, strides[1] * i + di, strides[2] * j + dj, q] *
                        filter[di, dj, q, k]

Must have strides[0] = strides[3] = 1. For the most common case of the same horizontal and vertices strides, strides = [1, stride, stride, 1].
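To make the formula concrete, here is a naive sketch of it in plain Python. It is simplified on purpose: one image, one input channel, one output channel, a square kernel, and no padding (so it corresponds to the VALID case rather than the SAME padding used in the sample code). conv2d_valid is a name made up for this illustration, not a TensorFlow function.

```python
def conv2d_valid(image, kernel, stride):
    """Naive strided 2-D convolution (cross-correlation) without padding.

    output[i][j] = sum over di, dj of
                   image[stride * i + di][stride * j + dj] * kernel[di][dj]
    """
    k = len(kernel)  # assumes a square k x k kernel
    out_h = (len(image) - k) // stride + 1
    out_w = (len(image[0]) - k) // stride + 1
    output = [[0] * out_w for _ in range(out_h)]
    for i in range(out_h):
        for j in range(out_w):
            for di in range(k):
                for dj in range(k):
                    output[i][j] += image[stride * i + di][stride * j + dj] * kernel[di][dj]
    return output


# 5x5 image whose pixel value is 5 * row + col, and a 3x3 kernel of ones.
image = [[5 * r + c for c in range(5)] for r in range(5)]
kernel = [[1] * 3 for _ in range(3)]

print(conv2d_valid(image, kernel, stride=2))  # [[54, 72], [144, 162]]
```

With stride=2 the window is evaluated at only 2x2 positions; with stride=1 the same image would give a 3x3 output.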

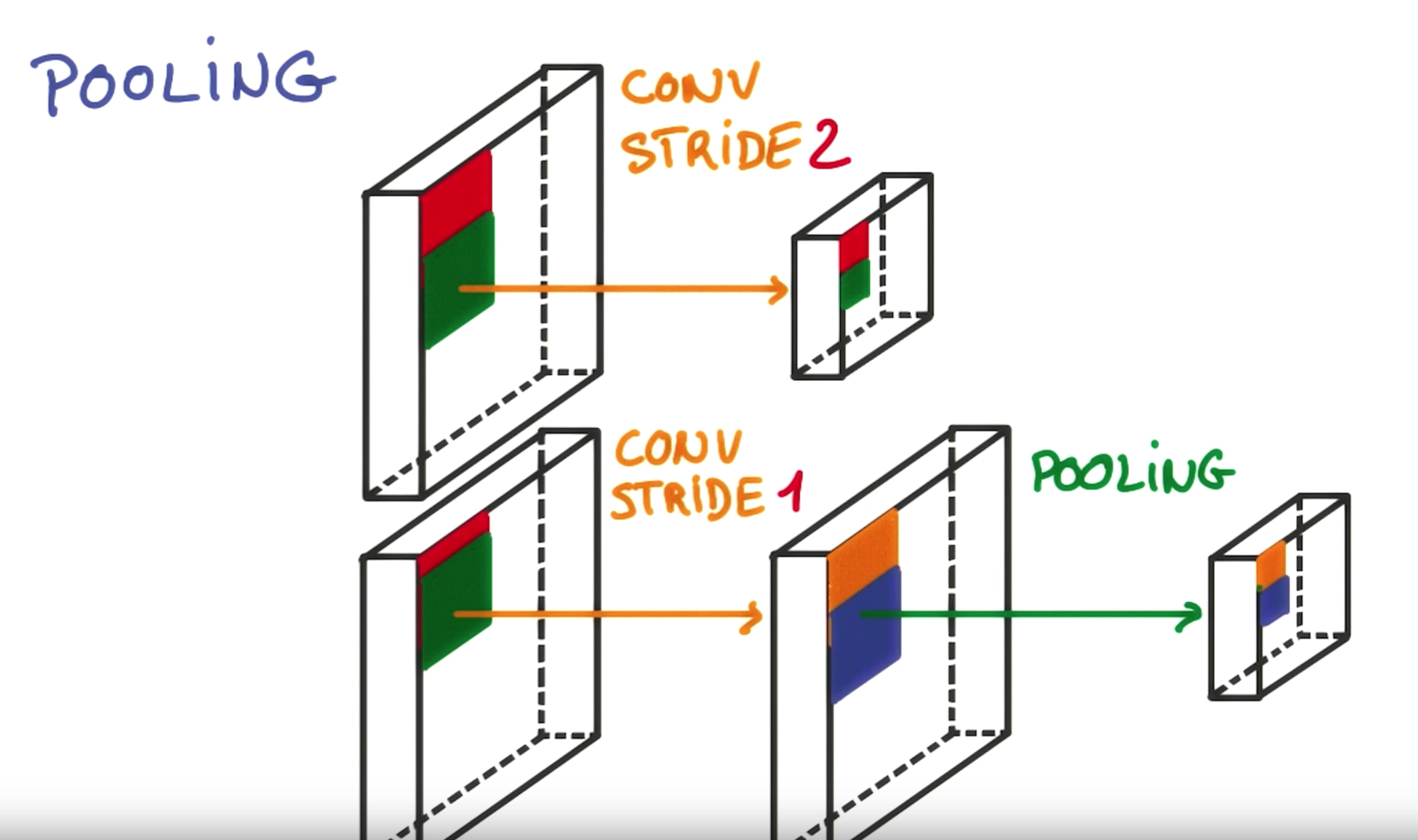

这个卷积操作应该类似下图(每次移动stride=2):

Running it gives:

    Original Test accuracy: 89.1%

There is plenty of room for improvement.

题目1

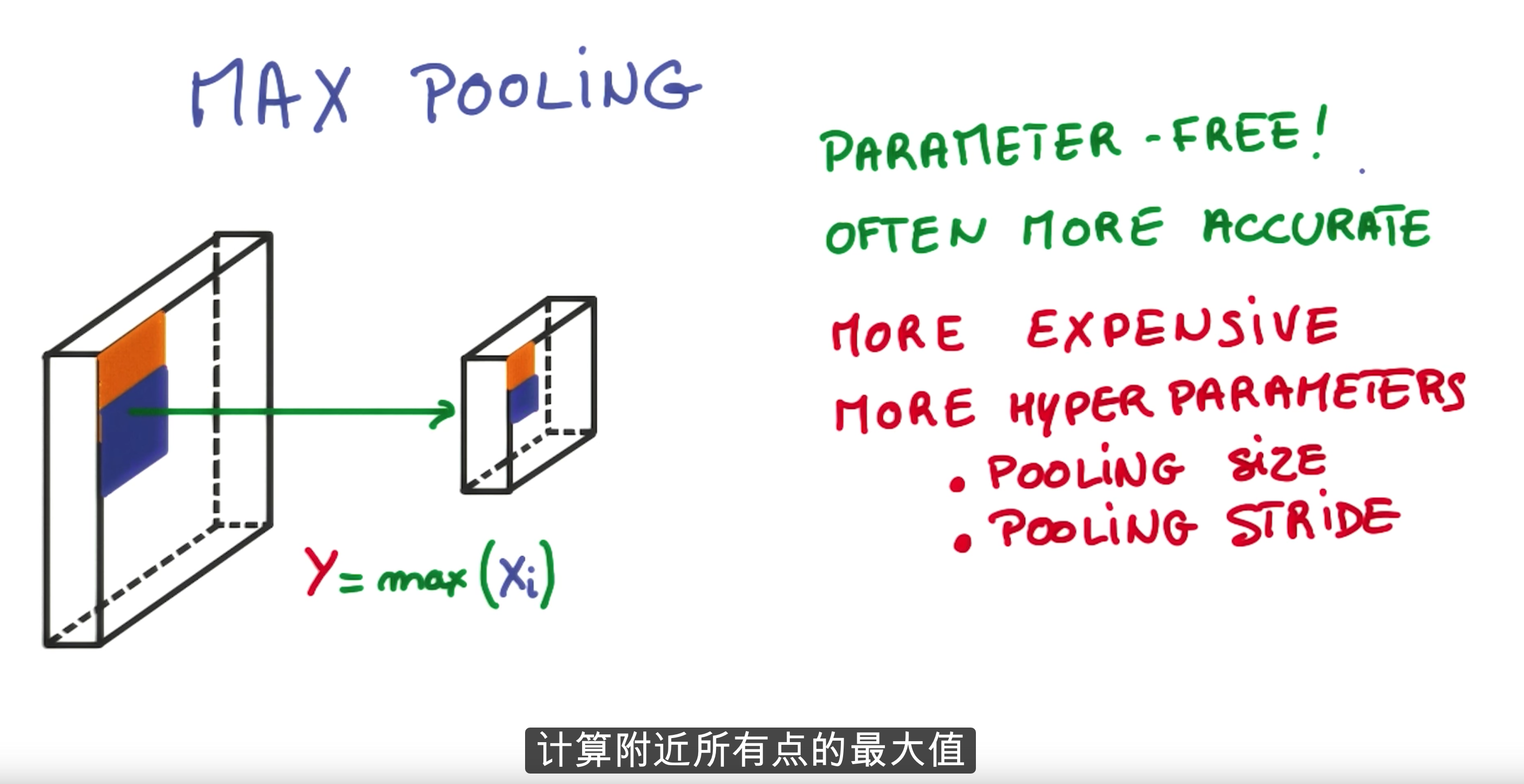

使用kernel=2的max pooling代替上面的stride。

stride=2跳过了两行或两列像素,这是一种激进的方法(优达学城翻译成“非常有效的方法”,肯定不对),因为这种采样策略损失了不少信息。max pooling的大意是取每个点周围的最大值,具体内部怎么实现我也不猜了,反正这就是个快餐教程,以后肯定要看更务实的课程。
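The difference can be seen on a tiny feature map. The sketch below is plain Python, not TensorFlow; max_pool_2x2 is a made-up helper that assumes an even height and width. It contrasts keeping only every other position, which is roughly what a stride of 2 does to the convolution output, with taking the maximum of each 2x2 window.

```python
def max_pool_2x2(feature_map):
    """2x2 max pooling with stride 2 on a 2-D list with even height and width."""
    pooled = []
    for i in range(0, len(feature_map), 2):
        row = []
        for j in range(0, len(feature_map[0]), 2):
            window = [feature_map[i][j], feature_map[i][j + 1],
                      feature_map[i + 1][j], feature_map[i + 1][j + 1]]
            row.append(max(window))
        pooled.append(row)
    return pooled


feature_map = [[1, 3, 2, 0],
               [4, 2, 1, 5],
               [0, 1, 9, 2],
               [3, 2, 4, 6]]

subsampled = [row[::2] for row in feature_map[::2]]

print(subsampled)                 # [[1, 2], [0, 9]]
print(max_pool_2x2(feature_map))  # [[4, 5], [3, 9]]
```

Both outputs are 2x2, but subsampling never looks at three of the four values in each window, while max pooling lets the strongest response in the window survive.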

Replace

    conv = tf.nn.conv2d(data, layer1_weights, [1, 2, 2, 1], padding='SAME')
    hidden = tf.nn.relu(conv + layer1_biases)
    conv = tf.nn.conv2d(hidden, layer2_weights, [1, 2, 2, 1], padding='SAME')
    hidden = tf.nn.relu(conv + layer2_biases)

with:

    conv1 = tf.nn.relu(tf.nn.conv2d(data, layer1_weights, [1, 1, 1, 1], padding='SAME') + layer1_biases)
    pool1 = tf.nn.max_pool(conv1, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')
    conv2 = tf.nn.relu(tf.nn.conv2d(pool1, layer2_weights, [1, 1, 1, 1], padding='SAME') + layer2_biases)
    pool2 = tf.nn.max_pool(conv2, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

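Output:

    Max pool Test accuracy: 89.6%

A slight improvement. Note that here

    num_steps = 1001

so the result is not really comparable with the previous assignment. Training a convolutional network is far too slow: last time it boiled two kettles of water, this time it is a BBQ.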

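Problem 2

Using the classic LeNet-5 as a reference, improve the convolutional network with techniques such as Dropout and learning rate decay.

Not counting the input layer, LeNet-5 has 7 layers. Imitating it completely would be too complicated, and even if I wrote it out my machine probably could not run it. Let's just add regularization, dropout and exponential decay and be done with it.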

Dropout

Add Dropout to the output of the hidden layer:

    fc1_drop = tf.nn.dropout(fc1, keep_prob)
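For intuition, tf.nn.dropout keeps each element with probability keep_prob, scales the kept elements by 1 / keep_prob, and zeroes the rest. A minimal plain-Python sketch of that behavior follows; the dropout function and the rng parameter here are illustrative, not the TensorFlow implementation. How keep_prob is defined is not shown in the post; a common pattern is to feed a value below 1 during training and 1.0 when evaluating.

```python
import random


def dropout(values, keep_prob, rng=random):
    """Inverted dropout: keep each value with probability keep_prob and
    scale the survivors by 1 / keep_prob so the expected value is unchanged."""
    return [v / keep_prob if rng.random() < keep_prob else 0.0 for v in values]


rng = random.Random(0)  # seeded so the run is repeatable
activations = [1.0] * 10000
dropped = dropout(activations, keep_prob=0.5, rng=rng)

print(sorted(set(dropped)))         # [0.0, 2.0]
print(sum(dropped) / len(dropped))  # close to 1.0
```

With keep_prob=1.0 every value passes through unchanged, which is what evaluation needs.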

Regularization

Add an L2 regularization term with beta=0.001 to the loss function:

    loss = cross_entropy + 0.001 * (
        tf.nn.l2_loss(layer3_weights) + tf.nn.l2_loss(layer3_biases) +
        tf.nn.l2_loss(layer4_weights) + tf.nn.l2_loss(layer4_biases))
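tf.nn.l2_loss(t) computes sum(t ** 2) / 2, so the penalty grows with the squared magnitude of the parameters. A tiny worked example in plain Python, with made-up numbers for the data loss and the parameters:

```python
def l2_loss(values):
    """Same quantity as tf.nn.l2_loss for a flat list: sum(v ** 2) / 2."""
    return sum(v * v for v in values) / 2.0


beta = 0.001
cross_entropy = 0.5    # made-up data loss, for illustration only
weights = [3.0, -4.0]  # made-up parameter values

penalty = beta * l2_loss(weights)  # 0.001 * (9 + 16) / 2
total_loss = cross_entropy + penalty
print(l2_loss(weights), penalty, total_loss)
```

With beta this small the penalty only nudges the loss, but its gradient still pulls every regularized parameter toward zero at each step.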

Learning rate

Add learning rate decay to the optimizer:

    learning_rate = tf.train.exponential_decay(1e-1, global_step, num_steps, 0.7, staircase=True)
    optimizer = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step=global_step)
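The schedule documented for tf.train.exponential_decay is base_rate * decay_rate ^ (global_step / decay_steps), where staircase=True turns the division into an integer division. The sketch below reimplements that formula in plain Python to show what the arguments above produce; it assumes num_steps is still 1001 and that the loop runs num_steps iterations, as earlier in the post.

```python
def exponential_decay(base_rate, global_step, decay_steps, decay_rate, staircase=False):
    """Formula documented for tf.train.exponential_decay."""
    if staircase:
        exponent = global_step // decay_steps
    else:
        exponent = global_step / float(decay_steps)
    return base_rate * decay_rate ** exponent


num_steps = 1001  # value used earlier in the post; assumed unchanged here

for step in (0, 500, 1000, 1001):
    staircase_rate = exponential_decay(0.1, step, num_steps, 0.7, staircase=True)
    smooth_rate = exponential_decay(0.1, step, num_steps, 0.7, staircase=False)
    print(step, staircase_rate, smooth_rate)
```

Under these assumptions, with decay_steps equal to num_steps and staircase=True, the rate stays at 0.1 for every training step and would only drop to about 0.07 at step 1001. A smaller decay_steps, or staircase=False, would let the decay actually take effect during training.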

Output:

    Lenet 5 Test accuracy: 94.6%

Reference:

https://arxiv.org/pdf/1603.07285v1.pdf

https://github.com/vdumoulin/conv_arithmetic

码农场
